Duplicacy init sftp example

4/19/2023

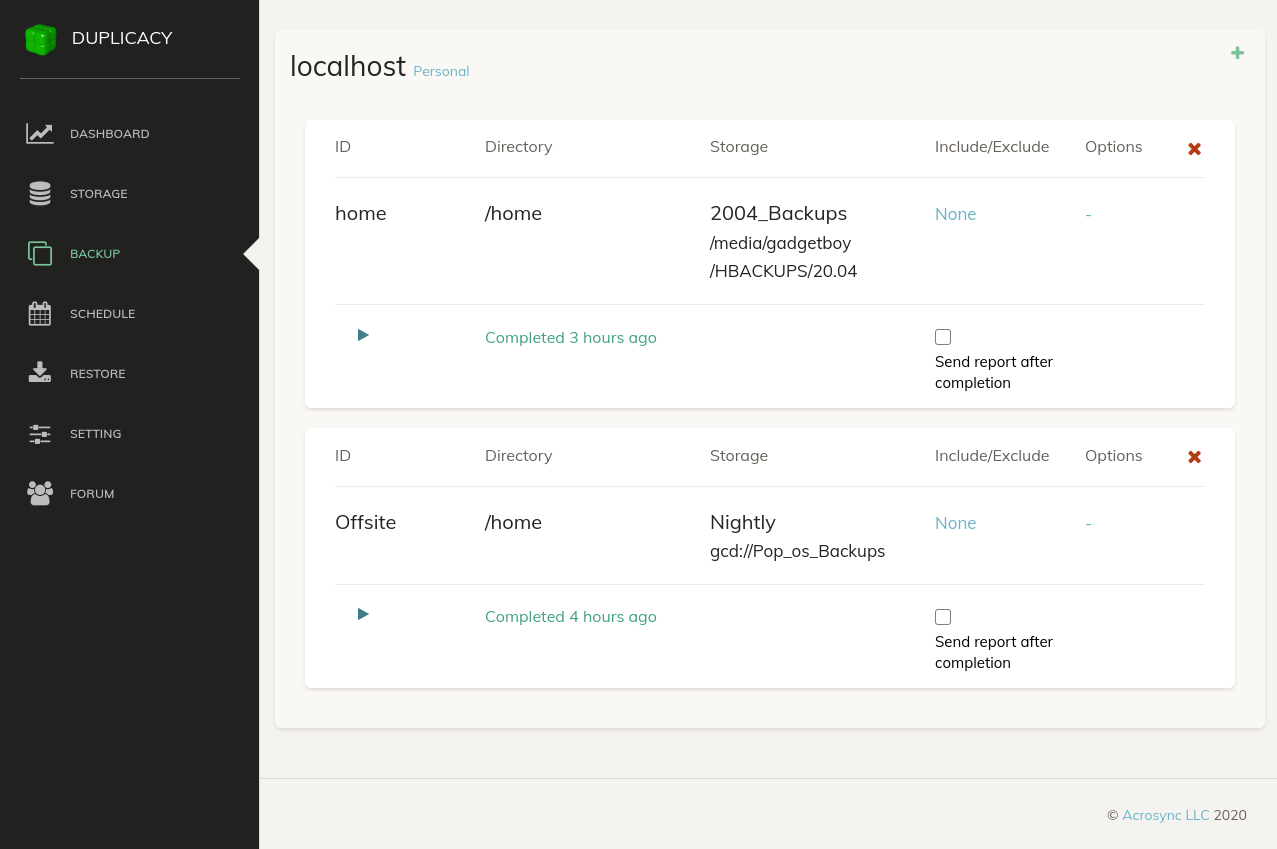

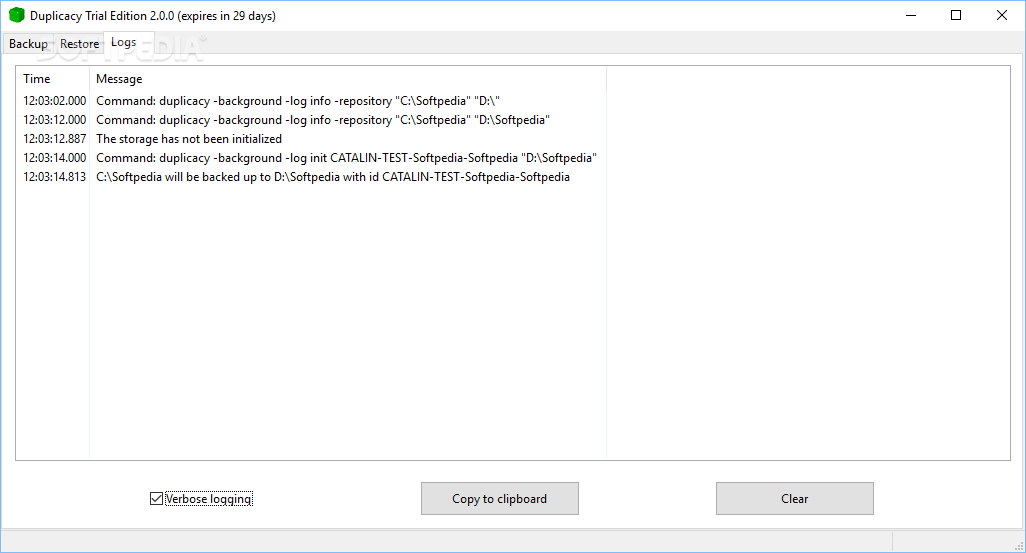

Via sftp, restic attempts to set file permissions to 0700, which doesn't work so well if sftp is set up with separate accounts either. It allows for partitioning users away from each other, but at the cost of needing yet another set of credentials to juggle.

Borg Backup is very dependent upon a local cache (meaning that system restores get uglier) and has very limited support for transfer protocols, and Duplicacy has a weird license, but both could potentially work as well, particularly if either a local backup is kept or a transfer protocol other than sftp is used (in the case of Duplicacy).

For handling cloud storage, I've set up access to restic via its Rest Server, so that all files are owned by the user the daemon runs as (which neatly bypasses a lot of the permissions issues). This problem does go away if a local backup is also kept, however.

Borg Backup and Duplicacy could be options, but hit problems when attempting to secure clients from each other, since setting up per-user sftp chroot jails on top of rclone mount has its own security issues (that of needing to grant the container CAP_SYS_ADMIN, which is.

Some of them are designed to fundamentally work against tape drives and not disk drives, leading to other issues (Bacula, Bareos, BURP). Several don't play nice with rclone mount due to symlinks/hardlinks (BackupPC, UrBackup) or file permissions (restic via sftp), and many rely on the server for encryption, meaning that compromising the server means that all data is compromised (BackupPC, UrBackup). I found that, after searching through several different options, the one that worked best for me is restic.
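To make the Rest Server setup mentioned above a little more concrete, here is a minimal sketch of how a client might assemble its restic call. The host name, user name, and helper names are hypothetical, and port 8000 is only assumed because it is Rest Server's default listen port; authentication to the server is left out entirely.

```python
import os
import subprocess


def restic_rest_command(host, user, paths, port=8000, scheme="https"):
    """Build a restic backup command that targets a Rest Server repository.

    host, user, and the per-user URL layout are illustrative assumptions,
    not details taken from the setup described in this post.
    """
    # One path per user, so each client writes to its own repository.
    repo = f"rest:{scheme}://{host}:{port}/{user}/"
    return ["restic", "--repo", repo, "backup", *paths]


def run_backup(host, user, paths, password):
    """Run the backup, handing the repository password to restic via the environment."""
    env = dict(os.environ, RESTIC_PASSWORD=password)
    return subprocess.run(restic_rest_command(host, user, paths), env=env, check=True)
```

Because the daemon owns every file it writes, the client only ever talks HTTP(S) to the server and never touches file permissions itself, which is the property that sidesteps the sftp 0700 issue.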

These have an impact on the options for backup software. 0-byte files can be deleted, but cannot be edited.

There is no file ownership - similar to SMB, all files are owned by a single user.

things that don't work) about this approach:

The mount script assumes that there's something in cloud storage (otherwise loops waiting for something), so you may need to mount it by hand and populate it with something first to have the systemd approach work as expected.

Installing it is as simple as you would expect:

Using rclone, it's possible to mount different cloud storage providers, including OneDrive.

Storage

But how to get considerably more storage? In my case, I started using Microsoft 365, so would it be possible to mount a OneDrive drive in Linux? As it turns out: yes, albeit with caveats.

Having access to considerably more storage allows for a single system to perform both, while still being secure. Not being able to see the names of files being restored can be quite painful.

Why skip the local backup? Well, because the previous method, although secure, doesn't lend itself well to restores, since separate systems handle the backups versus the encryption. As a result, to be able to restore a file, I would need to know the "when" of the file, then restore the entire backup for that system at that time, then mount that backup to be able to find the file, rather than being able to grab the one file I want.

This time through, I decided I wasn't going to worry quite as much about the onsite backup (shame on me) and decided to back straight up into the cloud. As you can imagine, an unreliable backup system isn't actually a backup system, so I went hunting for something else. So, attempting to recreate the database, it ended up in a stalled state for longer than the backup itself took, similar to this issue.
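The mount-script caveat noted earlier (it loops until something shows up in cloud storage) can be illustrated with a small polling helper. This is only a sketch of that idea with a timeout added so it cannot wait forever; the function name and parameters are hypothetical and it is not the actual mount script.

```python
import os
import time


def wait_for_populated_mount(mount_point, timeout=60.0, interval=1.0):
    """Return True once mount_point holds at least one entry, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            if os.listdir(mount_point):
                return True  # something is visible, so the mount is usable
        except OSError:
            pass  # directory missing or not readable yet; keep waiting
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

An empty remote looks exactly like a mount that has not come up yet, which is why populating the storage by hand first makes a check like this pass.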

I hit something similar to this issue (Unexpected difference in fileset version X: found Y entries, but expected Z), but on a fresh backup, even after repeated attempts. I'd thought that Duplicati was going to serve my needs well, but it turns out that, as typical, things are a bit more.
